Performance problems are at the top of our “danger” list. Finding the source of a critical problem can be straightforward, only hinted at by symptoms, or simply unknowable because of the interactions between multiple proprietary systems. Conditions that can be repeated are a great help.

I recall a million-dollar sales pitch that hinged on a system demonstration. The system failed, for two reasons. First, the developer had recompiled the software and omitted one of the modules; identifying this took lengthy, intensive work by senior engineers. Second, a hard disk was failing. The logs showed no errors, and the hardware recovery architecture reported no faults. All we knew was that a simple Unix “cat” command was returning incorrect results. We immediately replaced the hardware (both the disk and its connector cable), which solved the problem. The sale was consequently lost. However, it is fair to say the sale may have been lost regardless. Other indicators suggested possible bias, unrealistic demands for price discounts, and no real intention to purchase, so the sales team had its own issues. This is life.

We cannot believe everything technology tells us, so we take a broader approach, which gives us a better chance of seeing the real problem. Problem solving is a real skill. A simple technique is what we call halving the problem: starting from a position of knowing nothing, we eliminate potential causes half at a time. Think of a faulty gigabyte data file. Is the problem in the first half of the file or the second? Whichever half holds the fault is halved again, and so on. This is a far better approach than examining the file sequentially from the first byte to the last: each check rules out half of what remains, so roughly a billion bytes narrow down to a single byte in about 30 checks. It was quite amazing to meet IBM’s problem-solving team members from time to time and watch them at work first hand. My point is that there is much more involved in analysis and correction than we may realise. What we initially “see” may not be what we think it is.
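The halving technique maps directly onto a binary search. The sketch below is a minimal Python illustration, assuming we hold a known-good reference copy alongside the suspect file; the function name `first_bad_offset` is hypothetical. Each step compares a checksum (SHA-256, our choice) of the first half of the remaining range, in the same spirit as comparing checksums of two copies that live on different machines.

```python
import hashlib
from typing import Optional


def _digest(chunk: bytes) -> bytes:
    """Checksum used to compare two regions without comparing every byte by eye."""
    return hashlib.sha256(chunk).digest()


def first_bad_offset(suspect: bytes, reference: bytes) -> Optional[int]:
    """Find the first byte where `suspect` differs from `reference` by halving.

    Assumes both copies are the same length. Returns None if they match.
    """
    if len(suspect) != len(reference):
        raise ValueError("copies must be the same length")

    lo, hi = 0, len(suspect)
    if _digest(suspect[lo:hi]) == _digest(reference[lo:hi]):
        return None  # nothing to find

    # Invariant: every byte before `lo` matches, and [lo, hi) holds a difference.
    while hi - lo > 1:
        mid = (lo + hi) // 2
        if _digest(suspect[lo:mid]) != _digest(reference[lo:mid]):
            hi = mid  # the fault is in the first half
        else:
            lo = mid  # the first half is clean, so look in the second half
    return lo
```

For example, corrupting byte 654,321 of a one-megabyte buffer and calling `first_bad_offset(bytes(bad), good)` returns `654321` after about 20 halvings. On a single machine a plain slice comparison would do; the checksum stands in for the case where the two copies cannot sit side by side.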

Unfortunately, many help desk calls are first handled by staff with limited skills. They work through a number of scripted steps, even when we already know these will lead nowhere; for example, “Have you upgraded the software to the latest version?” Help desk staff can go off on terrible tangents or simply not understand what we are saying. Good, professional centres will have higher-tier staff who can come into the picture to solve a problem. Even then, it can be hazy. For example, a provider may not have deleted an old configuration that blocks a function. They may insist it is not their fault. Then, months later, you get hold of someone else who knows straight away that the problem is on their side, not yours, even though you knew that all along. This is how IT works.

Competition Performance Quality

Competing cPanel-based services use LiteSpeed for the most efficient website performance possible, meaning fast page display. However, does that performance extend to editing pages, multiple domains, potential points of failure such as a database hosted in another country, or variable demand on shared infrastructure with shared IPv4 addresses?

Advantage Web has installed and currently maintains OpenLiteSpeed on Linux, but our preference is Nginx with memcached for in-memory caching to achieve the highest performance, or Apache2 (httpd on AWS) when an application requires it, for example when a client’s app depends on an older PHP version.

Page display is supported by caching, optimisations such as Expires headers and Gzip compression, critical CSS, and a CDN where international audiences require it. A bare-bones WordPress installation needs each of these to be configured.
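To make “Expires headers and Gzip” concrete, here is a minimal Python sketch of what those settings produce at the HTTP level. In practice Nginx, LiteSpeed or Apache adds these through server configuration, not application code; the function name `static_asset_response` and the 30-day lifetime are illustrative assumptions, not recommendations.

```python
import gzip
import time
from email.utils import formatdate
from typing import Dict, Optional, Tuple


def static_asset_response(
    body: bytes,
    client_accepts_gzip: bool,
    max_age_days: int = 30,
    now: Optional[float] = None,
) -> Tuple[Dict[str, str], bytes]:
    """Build caching and compression headers for a static asset (CSS, JS, images)."""
    now = time.time() if now is None else now
    max_age = max_age_days * 24 * 60 * 60  # lifetime in seconds

    headers = {
        # When both are present, HTTP/1.1 caches give max-age precedence over Expires.
        "Cache-Control": f"public, max-age={max_age}",
        "Expires": formatdate(now + max_age, usegmt=True),
        # Tell shared caches (including a CDN) the body depends on Accept-Encoding.
        "Vary": "Accept-Encoding",
    }

    if client_accepts_gzip:
        payload = gzip.compress(body, compresslevel=6)
        headers["Content-Encoding"] = "gzip"
    else:
        payload = body  # send uncompressed to clients that cannot decode gzip

    headers["Content-Length"] = str(len(payload))
    return headers, payload
```

The browser stores the asset until the expiry passes, which is why a re-click displays instantly, while Gzip shrinks text assets such as CSS and JavaScript on the first visit.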

The end result is a page that displays virtually at the click of a mouse on a desktop, and instantly on a second click.

As we dive deeper into a website’s backbone, we find these measures in place, limited, or even absent.

WordPress Theme Complexity

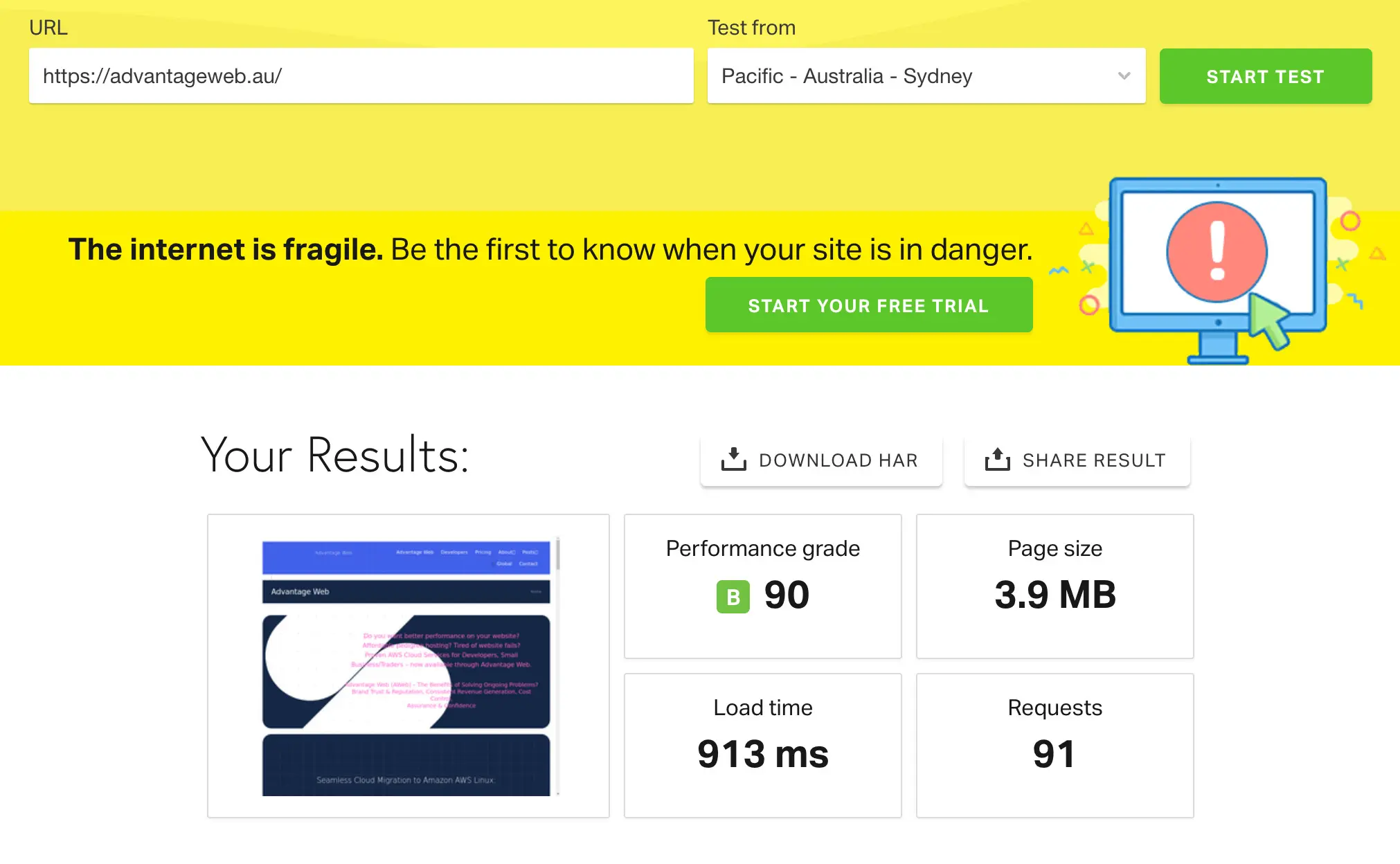

We would not normally expect a score of 90% or higher with third-party WordPress themes. Here we see a 90% rating on Avada, using every trick of the trade on a heavily loaded front page. The page’s images have not been fully compressed and are larger than recommended, in order to preserve detail, contrast, and blacks and whites. The Avada theme’s structure determines what can and cannot be minimised. The load time is virtually instant. Additionally, this is not the primary domain, so the page displays slightly slower than its potential. In this example, most ratings in the test are 100%, with the main compromise being the amount of code on the home page, flagged as “Make fewer HTTP requests”, which we cannot reduce. It is an excellent result. The page is a little faster again if we use .webp images instead.
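The “Make fewer HTTP requests” rating comes from counting the resources a page asks the browser to fetch. As a rough, hedged approximation, the Python sketch below counts stylesheets, external scripts, images and iframes written directly into the HTML using the standard `html.parser` module; the class and function names are hypothetical. It does not see CSS background images, web fonts, or requests injected by JavaScript, so a speed-test tool will usually report more.

```python
from html.parser import HTMLParser
from typing import List, Optional, Tuple


class RequestCounter(HTMLParser):
    """Collects tags in static HTML that make the browser issue another request."""

    def __init__(self) -> None:
        super().__init__()
        self.requests: List[Tuple[str, str]] = []

    def handle_starttag(self, tag: str, attrs: List[Tuple[str, Optional[str]]]) -> None:
        a = dict(attrs)
        # External scripts, images and frames are fetched via their src attribute.
        if tag in ("script", "img", "iframe") and a.get("src"):
            self.requests.append((tag, a["src"]))
        # Only <link rel="stylesheet"> counts here; icons and other rels are ignored.
        elif tag == "link" and a.get("href"):
            rel = (a.get("rel") or "").lower().split()
            if "stylesheet" in rel:
                self.requests.append((tag, a["href"]))


def count_requests(html: str) -> int:
    """Return how many sub-resource requests the static HTML declares."""
    parser = RequestCounter()
    parser.feed(html)
    parser.close()
    return len(parser.requests)
```

Inline scripts and styles are not counted, which is why combining or inlining small files reduces this figure.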

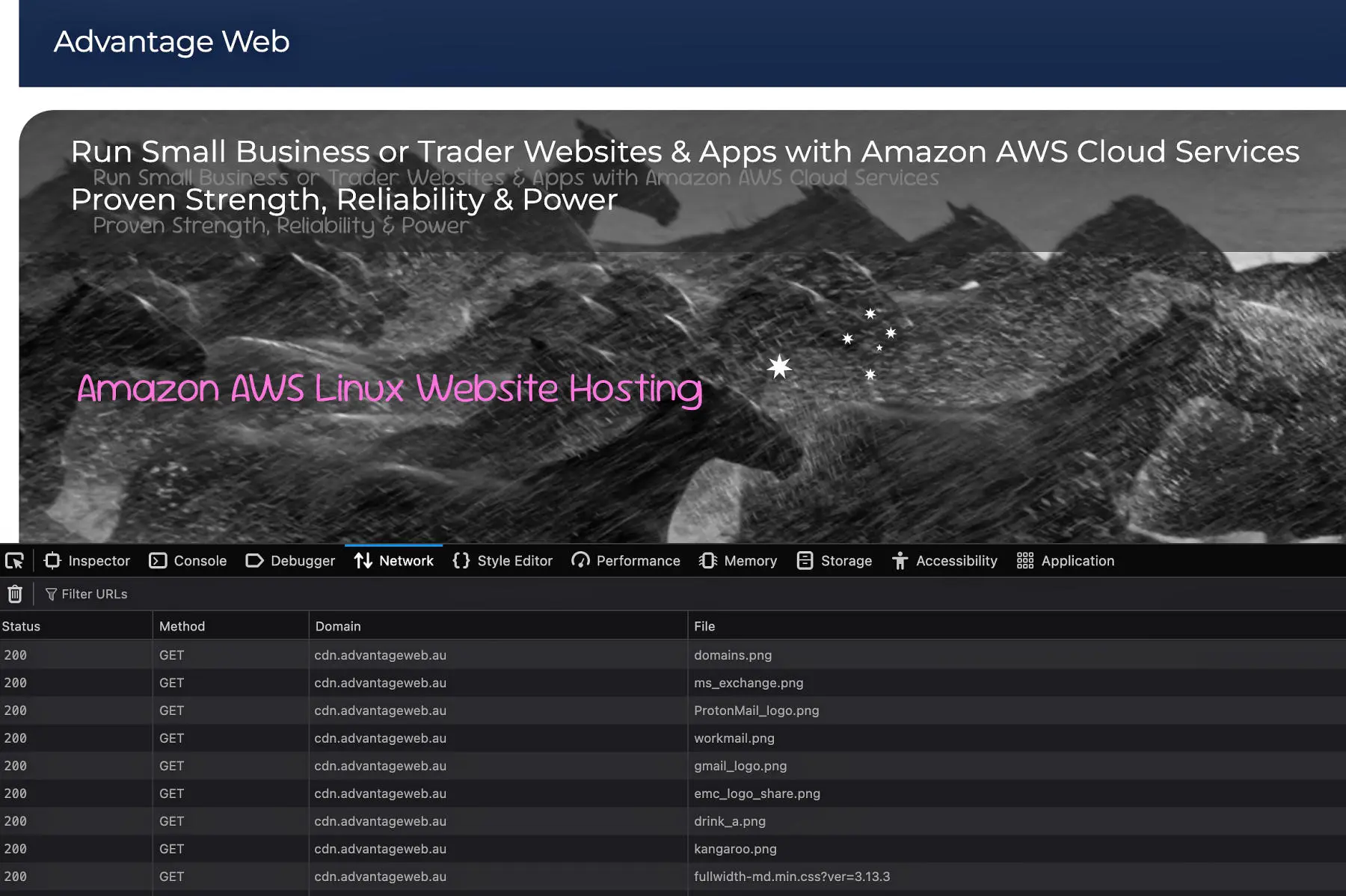

Here is a network inspection showing the use of a content delivery network (CDN), Amazon CloudFront.
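A network inspection like this can also be checked by reading the response headers. To our knowledge, CloudFront adds an `X-Cache` header (for example “Hit from cloudfront” or “Miss from cloudfront”), a `Via` header naming CloudFront, and an `X-Amz-Cf-Pop` header identifying the edge location. The Python sketch below classifies a set of headers on that assumption; the function name and its return shape are hypothetical. A “miss” means the edge had to fetch from the origin server, which is one plausible contributor to a slower load.

```python
from typing import Dict, Mapping, Optional, Union


def cloudfront_status(headers: Mapping[str, str]) -> Dict[str, Union[bool, Optional[str]]]:
    """Classify a response from its headers (names compared case-insensitively)."""
    h = {name.lower(): value for name, value in headers.items()}
    via = h.get("via", "").lower()
    x_cache = h.get("x-cache", "").strip()

    served = "cloudfront" in via or "cloudfront" in x_cache.lower()
    cache: Optional[str] = None
    if served and x_cache:
        # First word of X-Cache, e.g. "Hit from cloudfront" -> "hit".
        cache = x_cache.split()[0].lower()

    return {
        "served_by_cloudfront": served,
        "cache": cache,
        # Edge location that answered, if the header is present.
        "pop": h.get("x-amz-cf-pop"),
    }
```

Headers can be copied from the browser’s network panel, or taken from `urllib.request.urlopen(url).headers`, which provides the `items()` method this sketch relies on.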

Pingdom Speed Test: Load Time Variations

Why can a complex home page served through a CDN load in 788 ms one moment, and in 1.78 s or even 5 s later, with this kind of variation worldwide?

A reasonable view is that the Amazon CDN can itself slow down a site’s page display, and it has been known for slow performance. Its architectural details are, as expected, private to Amazon, but it does appear to have performance issues.

The trade-off is that the service is super cheap.
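Because a single speed test is only one sample, variation like this is best judged over repeated runs. The Python sketch below summarises a series of load times collected by whatever monitoring tool is in use; the function name and the 3,000 ms “acceptable” threshold are hypothetical choices for illustration. Comparing the median with the 95th percentile shows whether slowness is typical or occasional.

```python
import statistics
from typing import Dict, Sequence


def summarise_load_times(samples_ms: Sequence[float], threshold_ms: float = 3000.0) -> Dict[str, float]:
    """Summarise repeated page-load measurements, all in milliseconds."""
    if len(samples_ms) < 2:
        raise ValueError("need at least two samples")
    ordered = sorted(samples_ms)
    # 19 cut points at 5% steps; the last one is the 95th percentile.
    p95 = statistics.quantiles(ordered, n=20, method="inclusive")[-1]
    return {
        "min": ordered[0],
        "median": statistics.median(ordered),
        "p95": p95,
        "max": ordered[-1],
        # Share of runs slower than the (hypothetical) acceptable limit.
        "share_over_threshold": sum(t > threshold_ms for t in ordered) / len(ordered),
    }
```

Feeding in the three timings above, `summarise_load_times([788, 1780, 5000])` gives a median of 1780 ms and a 95th percentile of 4678 ms, with one run in three over the limit. Three runs are far too few to draw conclusions; the point is to collect many over days or weeks.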

In the IT industry we can only spend so much time, and dive so deep, building skills and knowledge of any one system. For example, it is just not possible to know all the ins and outs of Apache .htaccess configuration. We have to choose what we study and how much time to invest. Similarly, we have to decide what we will not study.

We can at least rely on generally accepted configurations drawn from the many forums and articles available.

Why is this so difficult? Surely configurations should be clearly defined and publicly available? This is not the case. When I worked at IBM Australia, I had access to detailed technical documents from IBM’s Boulder labs. No one in Australia had this level of detail. I strongly suspect the same is true of many IT systems. Some knowledge could only be deepened through paid coursework in other countries. For example, the retrieval of past bank statements using IBM OnDemand software is astonishingly good, reflecting the brilliant minds behind the inventions we use.

Another example is the Dovecot email service. There is far too much content, and too many components, to cover. The official documentation shows syntax, but not use cases, examples, or sufficient explanation. The detailed knowledge therefore rests with the developers and, in this case, a specific third-party company that configures the service correctly.

What we consistently find are conflicting recommendations across forums and articles, examples tied to one person’s experience and system, and even bad information we should not use.

A further example is iptables. Why is there no official document that shows how to use it, its best use cases, and what its rules really mean? We have not found such documentation.

We therefore work within the scope of what technology provides and make choices about how it fits in practice, leaning on experience and an understanding of best-practice principles. Over the years we develop a sense of what works, and where to be cautious or put our foot down. For instance, if a particular software package keeps failing, with breakages in all sorts of areas, we throw it away rather than hoping it will get better. Many people never learn these lessons or develop this real-life, practical intuition.

We also take care to establish where a problem is actually coming from. We may think we know the cause, only to find it is elsewhere. For instance, why does Google Tag Manager work well one day, and on another take several seconds to execute, blocking our web page for valuable seconds? These are the things we look at. Is it a DNS failure? Is it a Google failure? Is it something odd on the hosting platform? Is it the CDN? Is it our broadband provider? Hence the caution, and why we monitor over a period of time to determine whether we have an unacceptable problem.
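One way to start separating these candidate causes is to time each stage of a connection on its own, repeatedly. The Python sketch below, using only the standard `socket` module and a hypothetical function name `time_stages`, measures DNS resolution and TCP connection separately. It does not measure TLS, the server’s response, or script execution, so a slow page with consistently fast DNS and connect times points away from name resolution and the network path and toward the server, the CDN, or the script itself. The operating system’s resolver cache may make repeat lookups look faster than the first.

```python
import socket
import time
from typing import Dict


def time_stages(host: str, port: int = 443, timeout: float = 5.0) -> Dict[str, float]:
    """Time DNS resolution and TCP connection separately, in milliseconds."""
    t0 = time.perf_counter()
    infos = socket.getaddrinfo(host, port, type=socket.SOCK_STREAM)
    t1 = time.perf_counter()

    # Connect to the first address the resolver returned.
    family, socktype, proto, _, addr = infos[0]
    with socket.socket(family, socktype, proto) as sock:
        sock.settimeout(timeout)
        sock.connect(addr)
        t2 = time.perf_counter()  # stop the clock before closing the socket

    return {"dns_ms": (t1 - t0) * 1000, "connect_ms": (t2 - t1) * 1000}
```

Run on a schedule, for example `time_stages("www.googletagmanager.com")` every few minutes with the results logged, this builds the kind of history that shows whether a bad day was DNS, the network, or something further along.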