What is a CDN?

During our IT research or development we choose which areas to specialise in, what depth of learning to apply, and where to simply accept architectures and details as given so we can focus on applying them.

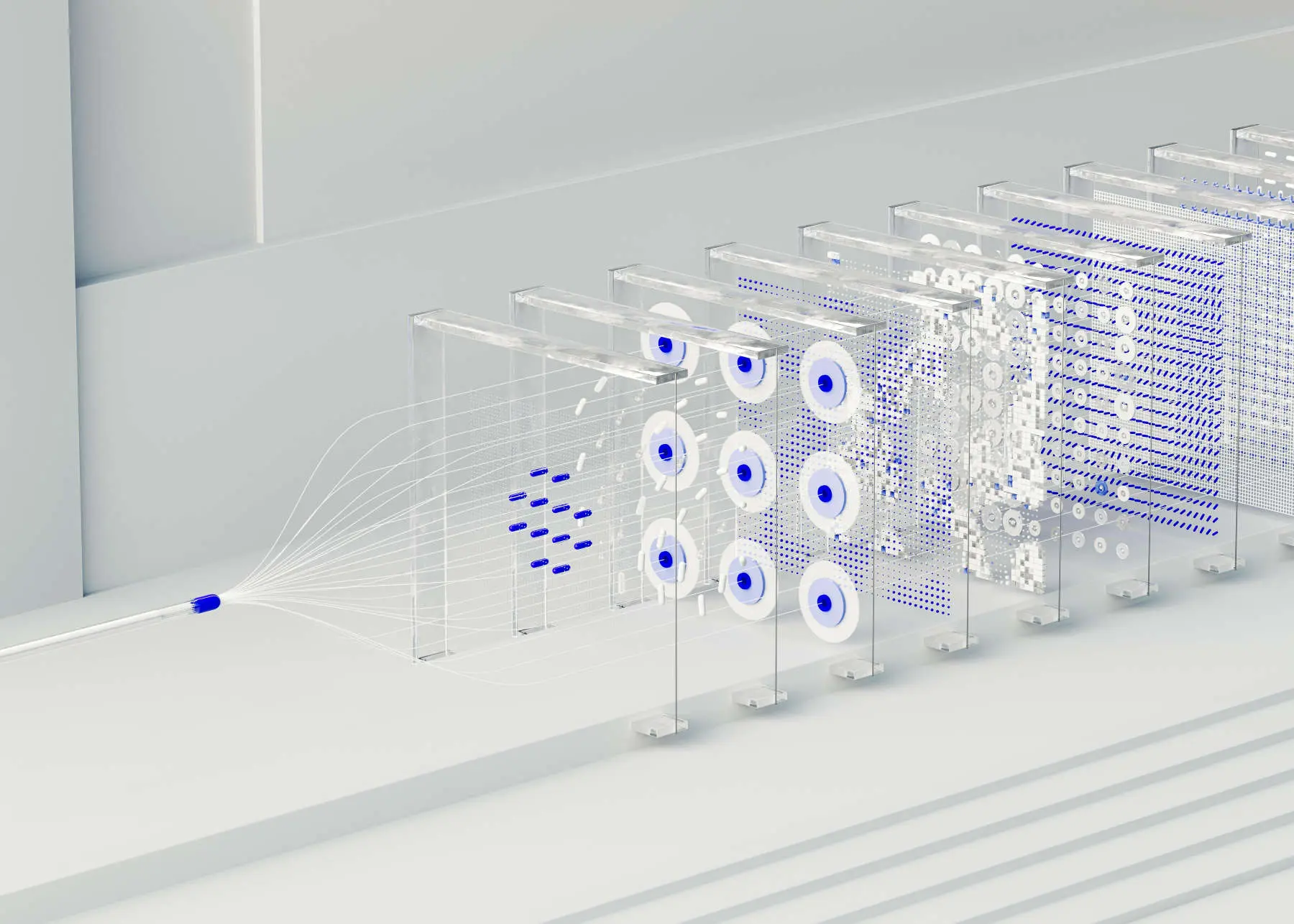

When we say “CDN” we have a notion of mysterious files being distributed around the world so that a web server in Sydney is not slow in London. This is not exactly how it works.

A CDN does use data centers, but they store cached objects with an expiration time (the TTL, or time to live), often set to 1 year in common industry practice. A cache does not store all the files. When a website visitor in London views my Brisbane River photography, the question behind the scenes is whether the data center closest to London has the images cached. If not, they are retrieved from the authoritative server in Sydney, e.g. my S3 bucket, which is the source of truth.
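The lookup described above can be sketched as a tiny cache with a TTL and an origin fallback. This is a simplified model for illustration only, not how any particular CDN is implemented; the names `EdgeCache`, `fetch_from_origin`, and the example path are hypothetical.

```python
import time
from dataclasses import dataclass
from typing import Callable, Dict, Tuple


@dataclass
class CachedObject:
    body: bytes
    expires_at: float  # epoch seconds after which the copy is considered expired


class EdgeCache:
    """Toy model of one edge location: serve from cache, else ask the origin."""

    def __init__(self, fetch_from_origin: Callable[[str], bytes],
                 ttl_seconds: float,
                 clock: Callable[[], float] = time.time) -> None:
        self._fetch = fetch_from_origin   # stands in for the S3 bucket (assumption)
        self._ttl = ttl_seconds
        self._clock = clock
        self._store: Dict[str, CachedObject] = {}

    def get(self, path: str) -> Tuple[bytes, str]:
        now = self._clock()
        entry = self._store.get(path)
        if entry is not None and entry.expires_at > now:
            return entry.body, "hit"      # served from the edge
        body = self._fetch(path)          # not cached or expired: go to the origin
        self._store[path] = CachedObject(body, now + self._ttl)
        return body, "miss"


if __name__ == "__main__":
    one_year = 365 * 24 * 3600
    london = EdgeCache(lambda path: b"image bytes", ttl_seconds=one_year)
    print(london.get("/brisbane-river.jpg")[1])  # first request goes to the origin
    print(london.get("/brisbane-river.jpg")[1])  # second request is served from cache
```

Only objects that someone has actually requested end up in `_store`, which is the point: the edge holds a working set, not a full copy of the origin.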

But it does not end there. Other factors determine whether the object can be served, and how long it stays in cache. These are the details we do not see, such as policies, caching pressure, how stale an object is, whether the object has been accessed again, and so on. Without these controls the caches would be hugely overloaded.

When we “invalidate” an image file, each file counts as one “path”, regardless of the directory structure. If I create a number of files in WordPress for sizes such as 300×300, 1024, and so on, each of these is part of the count. On a free CDN plan (you can have up to three distributions free), AWS charges $0 for up to 1,000 invalidation “paths” per month.
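To see how quickly paths add up, here is a small sketch that lists every path one upload would contribute. It assumes WordPress names resized copies `name-WIDTHxHEIGHT.ext`; the upload path and the sizes passed in are hypothetical examples, and `invalidation_paths` is an illustrative helper, not part of any library.

```python
from pathlib import PurePosixPath
from typing import List, Tuple


def invalidation_paths(original: str, sizes: List[Tuple[int, int]]) -> List[str]:
    """Return the original path plus one path per generated size."""
    p = PurePosixPath(original)
    paths = [str(p)]
    for width, height in sizes:
        # e.g. logo.png -> logo-300x300.png (assumed WordPress naming)
        paths.append(str(p.with_name(f"{p.stem}-{width}x{height}{p.suffix}")))
    return paths


if __name__ == "__main__":
    paths = invalidation_paths("/wp-content/uploads/2024/05/logo.png",
                               [(300, 300), (1024, 683)])
    for path in paths:
        print(path)
    print(len(paths), "paths counted against the monthly allowance")
```

One image with two generated sizes already counts as three paths, so a theme that generates a dozen sizes multiplies the count accordingly.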

Why invalidate? If the same file is uploaded again, WordPress automatically stores it under the same filename with a suffix, e.g. logo.png and logo-1.png. We don’t really need to worry about the two files in the CDN, unless we are a large company with huge numbers of files, where a rethink of our CDN practices could be warranted.

If we delete logo.png before uploading it again, we will of course have the name logo.png in the Media Library (and CDN), not logo-1.png. If the filename is the same but the image is different (perhaps we edited it), the CDN “may” still hold a cached copy of the old image somewhere if someone has requested it, including ourselves in Sydney.

A clean way to fix this potential problem is to invalidate that exact file (and/or its related smaller sizes). In my CDN PHP code, I invalidate files when they are actually deleted from the Media Library. At the scale of my work this does not create a cost issue.
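A minimal sketch of invalidating exact files follows, assuming the AWS CDN here is CloudFront and that `boto3` is installed with credentials available. It is written in Python for illustration and is not the PHP script mentioned above; `build_invalidation_batch` and `invalidate` are hypothetical helper names, while `create_invalidation` is the boto3 CloudFront call.

```python
import time
from typing import Dict, List


def build_invalidation_batch(paths: List[str], caller_reference: str) -> Dict:
    """Build the InvalidationBatch structure for exact file paths."""
    for path in paths:
        if not path.startswith("/"):
            raise ValueError(f"invalidation paths must start with '/': {path!r}")
    return {
        "Paths": {"Quantity": len(paths), "Items": list(paths)},
        # Any string unique to this request; used to avoid accidental replays.
        "CallerReference": caller_reference,
    }


def invalidate(distribution_id: str, paths: List[str]) -> str:
    """Send the invalidation and return its id (needs boto3 and AWS credentials)."""
    import boto3  # imported here so the builder above works without boto3

    client = boto3.client("cloudfront")
    response = client.create_invalidation(
        DistributionId=distribution_id,  # hypothetical value supplied by the caller
        InvalidationBatch=build_invalidation_batch(paths, str(time.time())),
    )
    return response["Invalidation"]["Id"]
```

Calling `invalidate` from the hook that runs when a Media Library item is deleted would keep each request limited to the exact files involved.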

Huge files, such as the original photographs in my case, are not stored in the CDN but on a mounted S3 bucket using the mount-s3 AWS capability. These can be referenced with links for others to download, while the smaller web browser images are happily served from the CDN. (I noted that the new S3 Bucket filesystem service failed in my testing, and it is actually related to more complex use of the EFS filesystem. It also forced use of bucket versioning. I can’t see why S3 Bucket mounting is not the best way to go.)

There is little value in having a complex CDN, so I do not use a CDN plugin, and I do not cache .css and .js files. This assists speed of delivery. Plugins can be very complex and can slow response times, depending on what they are doing. For instance, if the AWS CDN is used from a site hosted outside of Amazon on another platform, a CDN plugin may slow things down too much, but of course this is up to one’s own testing. In this case, using only a custom PHP script to serve Media Library images from the CDN removes the delays. I also noted that with plugins, speed improved when all the services were on AWS, but that dependence should not apply without a plugin.

Invalidation gives us a change of state, whereby the cached object is marked as invalid, and therefore not used. It is not an immediate “deletion”. Other processes do the housekeeping as per their own design.
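The idea of invalidation as a change of state rather than a deletion can be modelled in a few lines. This is an illustrative toy under my own assumptions, not a description of how AWS or any CDN implements it internally; `LazyInvalidatingCache` and its counter-based marker are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Tuple


@dataclass
class Entry:
    body: bytes
    version: int  # the path's invalidation counter at the time of caching


class LazyInvalidatingCache:
    def __init__(self, fetch_from_origin: Callable[[str], bytes]) -> None:
        self._fetch = fetch_from_origin
        self._entries: Dict[str, Entry] = {}
        self._invalidations: Dict[str, int] = {}  # metadata only, no file bodies

    def invalidate(self, path: str) -> None:
        # Record the instruction; the cached bytes are not touched or deleted here.
        self._invalidations[path] = self._invalidations.get(path, 0) + 1

    def get(self, path: str) -> Tuple[bytes, str]:
        current = self._invalidations.get(path, 0)
        entry = self._entries.get(path)
        if entry is not None and entry.version == current:
            return entry.body, "hit"
        # Either never cached or marked invalid: fetch a fresh copy on this request.
        body = self._fetch(path)
        self._entries[path] = Entry(body, current)
        return body, "miss"
```

In this model `invalidate` does the same small amount of work whether or not the path was ever cached, and the stale copy is only replaced when the next request arrives.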

Some WordPress themes produce huge lists of file sizes, which I find frankly ridiculous. I include in my scripting allowable defined sizes, end of story. All images served in a responsive WordPress theme are scaled at time of display anyway. If an optimiser lowers our performance score, so what? We determine what we wish to do, not other tools. For example, if we reduce photographic images below 1800 pixels, browsers reduce the quality we see – but a tool may disagree with us. What we can do is work with .webp conversions, and take care around the percentage reductions used, and use lossless conversions for png files.
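An allow-list of sizes can be sketched as a simple filename check. The widths in `ALLOWED_WIDTHS` are hypothetical examples, the `name-WIDTHxHEIGHT.ext` pattern is an assumption about WordPress naming, and the sketch is in Python for illustration rather than the PHP scripting described above.

```python
import re
from typing import Set

ALLOWED_WIDTHS: Set[int] = {300, 1024, 1800}  # hypothetical allow-list

# Matches a trailing "-WIDTHxHEIGHT.ext", e.g. "photo-1024x683.jpg"
_SIZE_SUFFIX = re.compile(r"-(\d+)x(\d+)\.[A-Za-z0-9]+$")


def is_allowed_size(filename: str, allowed_widths: Set[int] = ALLOWED_WIDTHS) -> bool:
    """True if the file has no size suffix or its width is on the allow-list."""
    match = _SIZE_SUFFIX.search(filename)
    if match is None:
        return True  # assumption: files without a size suffix pass through
    return int(match.group(1)) in allowed_widths


if __name__ == "__main__":
    for name in ["photo-300x200.jpg", "photo-150x150.jpg", "photo.jpg"]:
        print(name, is_allowed_size(name))
```

Anything a theme generates outside the list is simply never pushed to, or served from, the CDN.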

After invalidation, a browser may still need to clear its own cache.

CDNs are like “a predictive distributed cache that learns what people use”. They are a temporary “thing” near users. To avoid costs, we do not invalidate with wildcards, and we make sure we keep within the free plan limits.
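A guard along these lines could run before any invalidation is sent. It is a sketch under assumptions: the 1,000 figure is the one quoted earlier in this post and should be checked against current pricing, and tracking `used_this_month` is left to the caller; `check_invalidation_budget` is a hypothetical helper.

```python
from typing import List

FREE_PATHS_PER_MONTH = 1000  # figure quoted in this post; verify before relying on it


def check_invalidation_budget(paths: List[str], used_this_month: int,
                              monthly_limit: int = FREE_PATHS_PER_MONTH) -> int:
    """Reject wildcards and over-budget requests; return the new monthly total."""
    wildcards = [p for p in paths if "*" in p]
    if wildcards:
        raise ValueError(f"wildcard paths are not allowed: {wildcards}")
    total = used_this_month + len(paths)
    if total > monthly_limit:
        raise ValueError(
            f"{total} paths would exceed the monthly limit of {monthly_limit}")
    return total
```

For example, `check_invalidation_budget(["/logo.png", "/logo-300x300.png"], used_this_month=10)` returns 12, while a path such as `/uploads/*` raises an error before any request is made.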

If a file is not cached, an invalidation request costs the same, because the cost relates to network and API calls, not to whether a cache sits in London or Singapore. We pay for the instruction to invalidate. Objects that are actually cached are stopped from being served on their next request. This avoids expensive “file” deletions (do not think of cache as files) and keeps the edge systems fast and simple. The caching does not hop around the world to each data center working out whether it has to delete or cache a file. Metadata keeps track of what to do, and housekeeping takes care of the cache. Invalidation is an instruction. In practice, bandwidth should not be our concern.

My own CDN scripting makes use of an IAM role attached to the EC2 instance, with various secured policies. However, an external system such as Akamai/Linode needs to use the AWS CLI and credential keys.